The State of AI Adoption in Private Equity — Use Cases, Challenges, and Where It's Going

A comprehensive look at how private equity firms are adopting AI — from deal sourcing to LP reporting — including real use cases, key challenges, and what's next.

Key Takeaways

- AI adoption in private equity is real, but unevenly distributed. Surveys show a clear and widening split between firms that have operationalized AI in core workflows and the majority still circling pilots.

- Value is concentrating upstream first. Deal sourcing, CIM synthesis, and AI due diligence are where firms are seeing the fastest returns.

- Portfolio impact is overtaking GP efficiency gains. Leading firms are now driving material EBITDA improvement inside portfolio companies through AI — not just saving analyst hours at the fund level.

- Most AI failures are organizational, not technical. Data fragmentation, weak governance, and unclear ownership consistently outrank model quality as adoption blockers.

- The frontier is shifting from tools to agents. The next operating-model shift is from AI assistants that answer questions to AI agents that execute workflows.

- Human judgment remains the constraint and the differentiator. The firms extracting real value are designing AI as a decision amplifier for analysts, not a replacement.

Private equity has no margin for error. Exit multiples have compressed, holding periods have stretched, and LPs are demanding clearer evidence of earnings quality. In that environment, AI adoption is no longer about curiosity — it is a practical response to pressure that shows up daily in deal teams, portfolio reviews, and fundraising conversations.

The context has shifted as much as the technology:

⟶ Bain's analysis is direct: the pressure to find exits, distribute funds, and source fresh capital continues to mount. When valuation expansion stops doing the work, firms are forced to manufacture alpha through better decisions, tighter diligence, and more active portfolio management.

⟶ Deloitte's latest survey shows that more than 80% of organizations have integrated GenAI into their workflows. But adoption is uneven. A small group has embedded AI into how work actually gets done. A much larger group is still stuck with isolated tools and ad hoc usage. That gap is widening, and it is beginning to show up in outcomes.

This article maps the full picture of AI adoption in private equity:

- The AI adoption curve (1)

- The use cases generating real ROI today (2)

- The operational challenges most firms underestimate (3)

- PE perspectives to consider (4)

- Where the industry is heading (5)

This article is based on insights gathered from more than 100 private equity analysts and partners; what we share here is only the tip of the iceberg.

1. The AI Adoption Curve — Where Firms Actually Stand

The rhetoric around AI in private equity now runs ahead of reality.

Most firms are experimenting. Far fewer have embedded AI deeply enough to actually change how deals are sourced, diligenced, and managed.

Adoption is broad, maturity is thin, and the performance gap between leaders and the rest is widening.

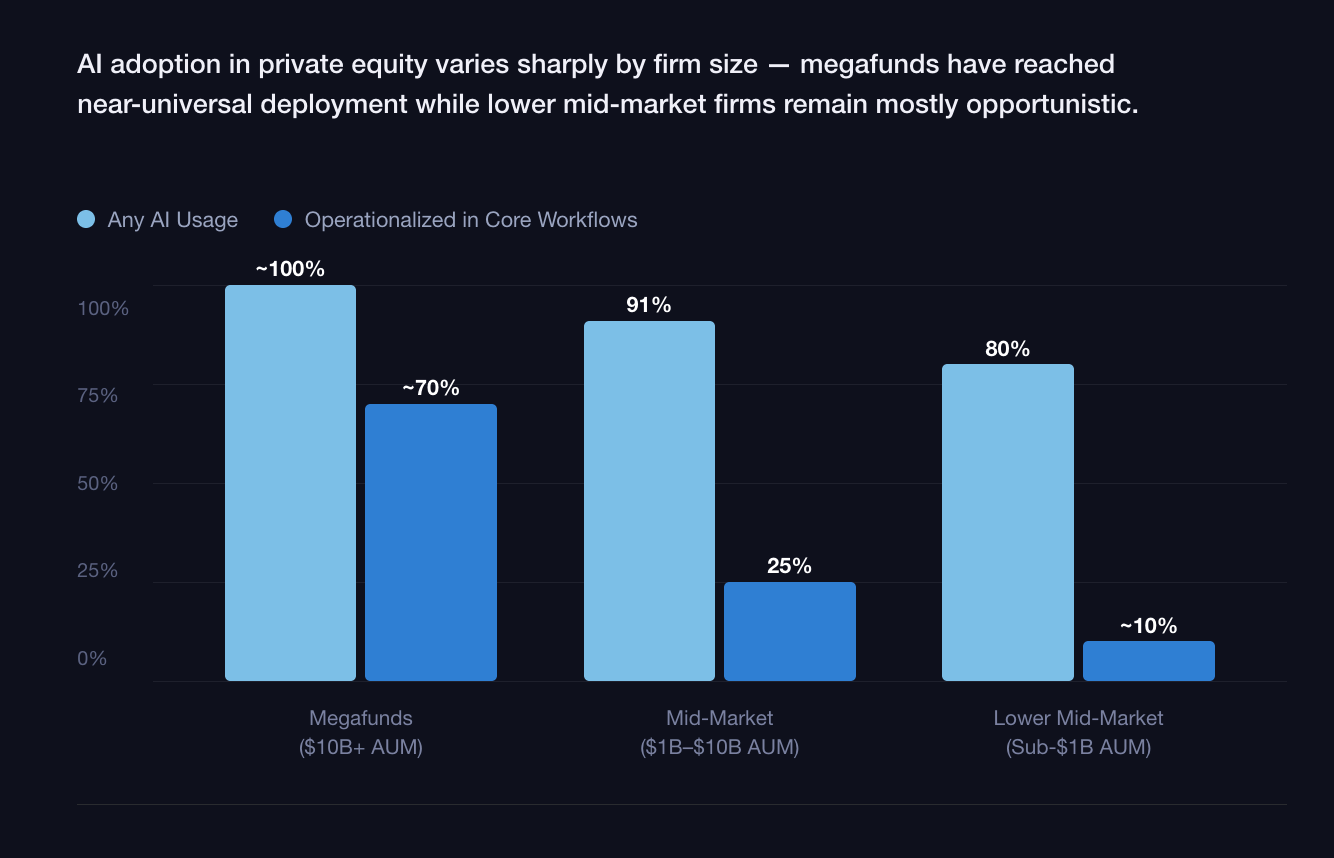

By firm size

- Megafunds ($10B+ AUM): from experimentation to infrastructure.

AI adoption has moved beyond individual tools. Large global sponsors are building centralized data platforms, internal AI teams, and governance structures that span funds and portfolio companies. 84% of these PE firms have now appointed a senior AI owner — often a Chief AI or Data Officer — with the highest concentration among large, multi-strategy firms. Blackstone alone employs more than 50 data scientists embedded directly in investment processes, with AI and analytics deployments across 70+ portfolio companies.

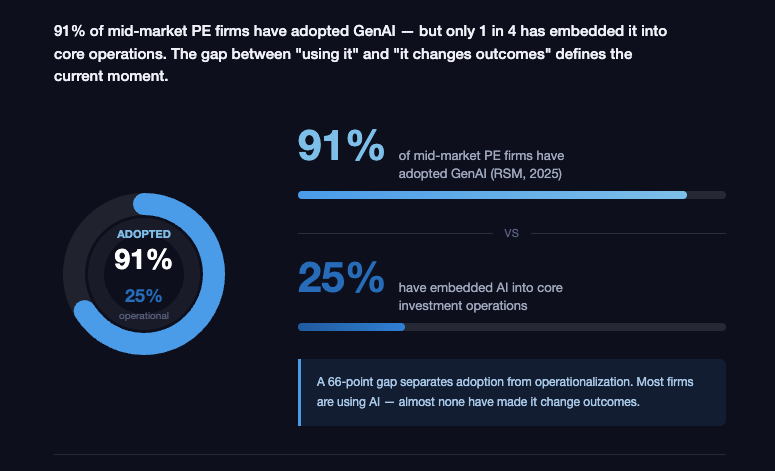

- Mid-market firms ($1B–$10B AUM): pilots everywhere, integration nowhere.

This is where activity is highest and coherence is lowest. 91% of middle-market firms have adopted generative AI — but only one in four has successfully embedded it into core operations. Deloitte confirms that a large majority report GenAI usage in pre-sign workflows, yet FTI finds that fewer than half have a formal AI strategy in place, and more than a third of those that do lack any mechanism to track impact.

- Lower middle market (sub-$1B AUM): awareness without infrastructure.

Smaller firms are highly aware of AI's potential but constrained by data quality, budget, and operating leverage. Most adoption here is opportunistic: individual analysts using general-purpose tools for research, memo drafting, or data cleanup. Central AI infrastructure investment is rare, and portfolio-level initiatives are typically deferred until after acquisition rather than planned during underwriting.

By maturity stage

Opportunistic use. AI lives on individual laptops. Analysts summarize CIMs, draft IC slides, and sanity-check assumptions. The productivity gain is real, but there is no institutional learning, and the risks around data leakage and consistency are meaningful.

Structured pilots. Firms formally approve AI tools for defined use cases. According to Deloitte, roughly 40% of GenAI adopters concentrate usage in strategy, target identification, and diligence. Value exists, but usage is episodic and dependent on local champions.

Strategic integration. This remains the exception. AI is embedded into workflows and decision processes — not bolted on. Data flows are centralized. Outputs are standardized. Human review is explicit. Firms operating here are compressing screening time from days to hours, institutionalizing AI-driven diligence questions, and making firmwide knowledge instantly accessible.

The numbers that matter

- AI Investment: Global corporate AI investment hit a record $252.3 billion in 2024 (and even more in 2025). Within PE, budgets for AI agents and automated research platforms are growing at a 30–50% CAGR.

- Investment per portfolio company: Leading PE firms now invest an average of $2.1M per portfolio company on AI initiatives.

- ROI on investment: Accenture reports that every $1 invested in AI transformation can return 2–4x in annualized EBITDA uplift at exit, a multiplier that is beginning to show up in fund performance data.

- Readiness: The uncomfortable truth is the gap between expectation and preparation. FTI reports that while a majority of PE executives expect AI to drive meaningful value creation within 18 months, less than half believe their organizations are actually prepared to implement it. EY's data shows AI budgets rising sharply alongside persistent difficulty linking AI-driven productivity to realized value.

The adoption curve in private equity is no longer about whether AI is being used. It is about whether it is changing outcomes — and today, that distinction separates a small group of leaders from a very crowded middle.

2. Where AI Is Creating Real Value Across the Private Equity Lifecycle

AI value concentrates at the highest-friction points of the deal cycle, where data volume, time pressure, and judgment intersect. What follows maps the stages where firms are seeing real results.

At Lampi AI, we have identified and worked on more than 150 use cases for private equity.

Below are some concrete examples:

1. Origination & Deal Maximization

The problem: Most deal flow is intermediated. Proprietary sourcing is relationship-dependent and covers a fraction of the actual opportunity universe.

What AI does:

- Monitors companies across sectors for hiring signals, ownership changes, web traffic, and patent activity

- Surfaces targets that fit the firm's historical investment pattern — before they enter a process

- Flags inflection points: management changes, revenue acceleration, ownership transitions

ROI: 35–85% productivity gain in early-stage screening (PwC). More importantly: materially broader deal coverage without adding headcount.

2. Screening, Scoring & One-Pager Generation

The problem: Every target requires analyst time to produce a basic fit assessment.

What AI does:

- Filters, screens, and scores targets against investment criteria: revenue range, EBITDA, growth, geography, ownership structure, etc.

- Auto-generates structured one-pagers, including financial benchmarks, sector context, management overview, and thesis fit

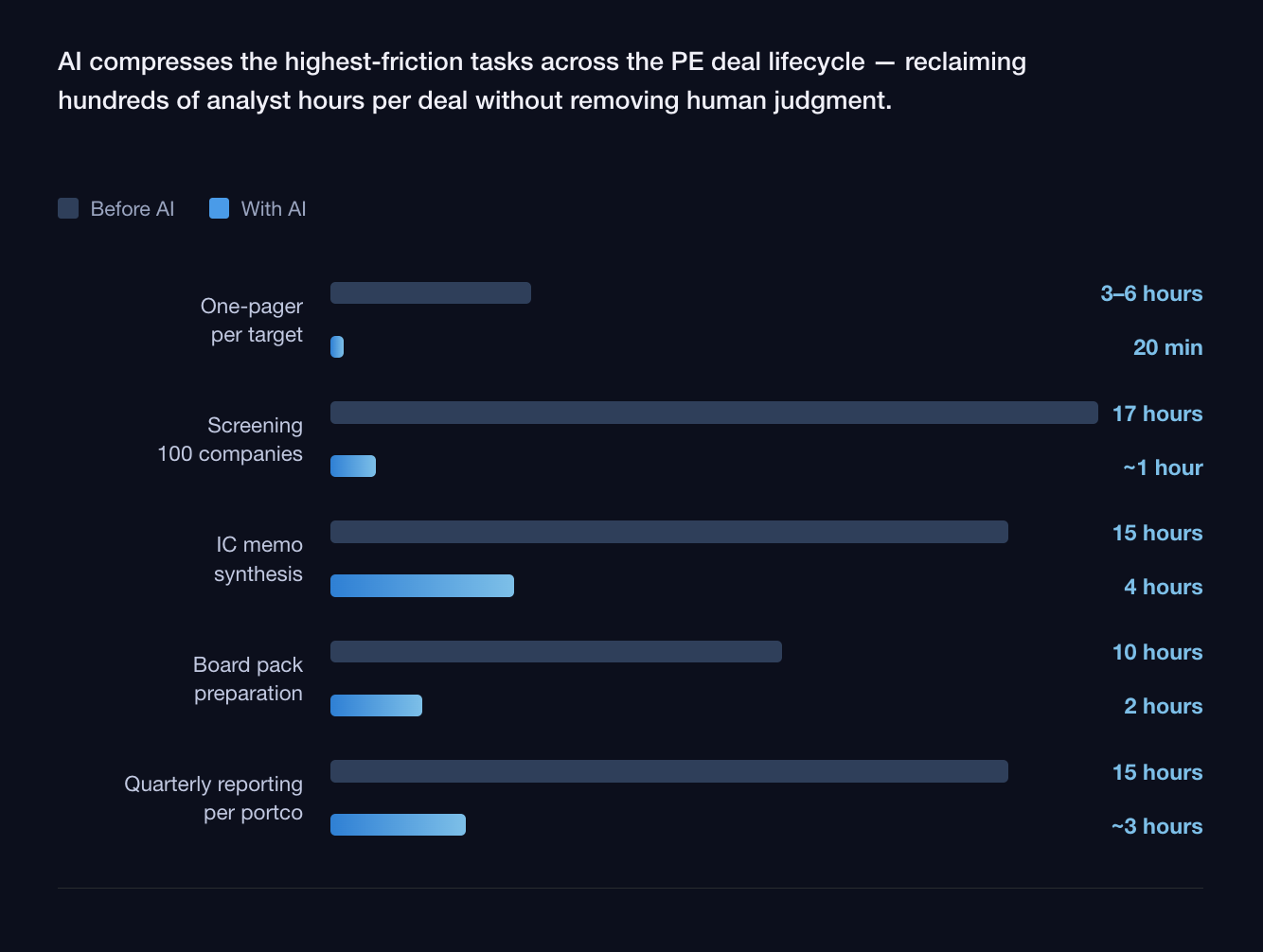

ROI: One-pager production drops from 3–6 hours to under 30 minutes. Screening itself can be almost completely automated.
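To make the screening step concrete, here is a minimal Python sketch of filter-then-score logic. The criteria thresholds, the weights, and the `Target` fields are hypothetical placeholders chosen for illustration, not any firm's actual mandate; a real screener would load its criteria from the fund's investment policy and its data from external providers.

```python
from dataclasses import dataclass

@dataclass
class Target:
    name: str
    revenue_musd: float
    ebitda_musd: float
    growth_pct: float
    geography: str
    founder_owned: bool

# Hypothetical investment criteria and scoring weights (illustrative only).
CRITERIA = {"revenue_range": (20.0, 200.0), "min_ebitda_margin": 0.10,
            "geographies": {"US", "UK", "DE"}}
WEIGHTS = {"growth": 0.5, "margin": 0.3, "founder_owned": 0.2}

def passes_filters(t: Target) -> bool:
    """Hard filters: any failure removes the target from the shortlist."""
    lo, hi = CRITERIA["revenue_range"]
    if not lo <= t.revenue_musd <= hi:
        return False
    if t.ebitda_musd / t.revenue_musd < CRITERIA["min_ebitda_margin"]:
        return False
    return t.geography in CRITERIA["geographies"]

def score(t: Target) -> float:
    """Weighted 0-100 fit score for targets that pass the filters."""
    growth = min(max(t.growth_pct / 30.0, 0.0), 1.0)            # 30%+ growth caps at 1.0
    margin = min(t.ebitda_musd / t.revenue_musd / 0.30, 1.0)    # 30%+ margin caps at 1.0
    owner = 1.0 if t.founder_owned else 0.0
    total = (WEIGHTS["growth"] * growth + WEIGHTS["margin"] * margin
             + WEIGHTS["founder_owned"] * owner)
    return round(100 * total, 1)

def screen(targets):
    """Filter, score, and rank targets from best to worst fit."""
    shortlisted = [t for t in targets if passes_filters(t)]
    return sorted(((t.name, score(t)) for t in shortlisted),
                  key=lambda pair: pair[1], reverse=True)

ranked = screen([
    Target("Alpha", 50.0, 10.0, 15.0, "US", True),
    Target("Beta", 300.0, 60.0, 20.0, "US", False),   # fails: revenue above range
    Target("Gamma", 80.0, 6.0, 25.0, "DE", True),     # fails: EBITDA margin 7.5%
    Target("Delta", 40.0, 12.0, 40.0, "UK", False),
])
print(ranked)  # [('Delta', 80.0), ('Alpha', 65.0)]
```

Separating hard filters from the weighted score keeps the ranking explainable: an analyst can see whether a target was excluded by a criterion or simply scored low.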

3. CIM & Business Plan Analysis

The problem: A typical mid-market analyst synthesizes 3,000+ pages of CIM materials per quarter. Competitive process timelines make thorough manual review physically impossible.

What AI does:

- Parses CIMs across all sections: financials, market overview, customer analysis, management bios, operational structure

- Extracts KPIs, flags inconsistencies between sections, surfaces sell-side narrative gaps, fact-checks market claims, etc.

- Normalizes EBITDA, reviews revenue bridges, benchmarks adjustments against comparable transactions

- Generates a structured synthesis analysts can interrogate — not reconstruct

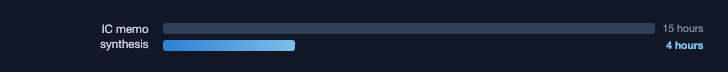

ROI: IC memo synthesis reduced from 15 hours to ~4 hours (PwC). At 20+ processes per year: significant reallocation of senior analyst time toward judgment.
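The cross-section consistency check described above can be illustrated with a small Python sketch. It assumes a hypothetical upstream extraction step (not shown) has already turned the CIM into `(metric, value, location)` tuples; the sketch only flags metrics whose reported values disagree beyond a relative tolerance, which is an illustrative choice.

```python
from collections import defaultdict

def find_inconsistencies(extractions, rel_tol=0.005):
    """Group extracted figures by metric and flag metrics whose values disagree.

    extractions: iterable of (metric, value, location) tuples.
    rel_tol: allowed spread relative to the largest absolute value (0.5% here).
    Returns {metric: [(value, location), ...]} for every inconsistent metric.
    """
    by_metric = defaultdict(list)
    for metric, value, location in extractions:
        by_metric[metric].append((value, location))

    flags = {}
    for metric, reports in by_metric.items():
        values = [value for value, _ in reports]
        spread = max(values) - min(values)
        scale = max(abs(v) for v in values)
        if spread > rel_tol * scale:
            flags[metric] = reports
    return flags

# Hypothetical extractions from one CIM: revenue differs in the appendix.
extracted = [
    ("FY24 revenue ($M)", 48.2, "Executive summary, p.3"),
    ("FY24 revenue ($M)", 48.2, "Financial overview, p.41"),
    ("FY24 revenue ($M)", 47.6, "Appendix B, p.88"),
    ("FY24 adj. EBITDA ($M)", 9.1, "Executive summary, p.3"),
    ("FY24 adj. EBITDA ($M)", 9.1, "Financial overview, p.42"),
]
flags = find_inconsistencies(extracted)
for metric, reports in flags.items():
    print(metric, reports)
```

Returning every location for a flagged metric, not just the outlier, lets the analyst decide which figure is authoritative.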

4. AI Due Diligence

The problem: Data rooms contain hundreds (even thousands) of documents. Human review is sequential, fatigued, and increasingly biased toward confirming the investment thesis as the process advances.

What AI does:

- Reviews the full data room: categorizes, extracts, flags, and summarizes documents

- Surfaces structured red flags before counsel review (termination clauses, change-of-control provisions, concentration risk, covenant triggers, IP gaps, litigation exposure)

- Turns the entire data room into a searchable intelligence layer — any clause, obligation, or exposure can be queried instantly

- Produces structured risk summaries

ROI: AI-enabled diligence reduces review time from weeks to days. At 600 documents per month, an 80–95% time reduction per document saves roughly 5,400 hours annually.
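The ~5,400-hour figure depends on a manual baseline per document that the text does not state. As a worked check, the Python sketch below assumes roughly 50 minutes of manual review per document (our assumption, not a sourced figure): at 600 documents per month that is 6,000 baseline hours a year, so a 90% reduction reproduces about 5,400 hours, and the full 80–95% range spans roughly 4,800 to 5,700 hours.

```python
def annual_review_hours_saved(docs_per_month, baseline_minutes_per_doc, time_reduction):
    """Hours saved per year when AI cuts per-document review time by `time_reduction` (0-1)."""
    baseline_hours_per_year = docs_per_month * 12 * baseline_minutes_per_doc / 60
    return baseline_hours_per_year * time_reduction

# 50 minutes per document is an illustrative assumption, not a sourced figure.
for reduction in (0.80, 0.90, 0.95):
    hours = annual_review_hours_saved(600, 50, reduction)
    print(f"{reduction:.0%} reduction: {hours:,.0f} hours/year")
```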

5. LOI, NDA, Legal Risk & Negotiation

The problem: Between exclusivity and signing, documentation volume peaks and the cost of missing a clause is highest.

What AI does:

Document review and drafting:

- NDAs and LOIs: flags non-standard clause constructions, unusual exclusivity terms, deviations from market norms

- SPA/APA: reviews rep and warranty scope, basket and cap structures, MAC definitions, indemnification mechanics — benchmarked against comparable transactions or templates

- Shareholders' agreements: drag/tag rights, governance provisions, anti-dilution mechanics, board composition

Negotiation preparation:

- Benchmarks debt terms against recent leverage finance precedents

- Gives deal teams a concrete evidence base in lender negotiations

Closing logistics:

- Capital call and LP notice compliance checked against fund documents

ROI: Primary value is risk reduction — catching a non-standard rep scope or a missing change-of-control trigger before close is worth multiples of the hours involved.

6. PortCo — Monitoring & Reporting

The problem: A 20-asset portfolio means 20 reporting packs, each requiring reconciliation and synthesis. Most firms operate with a 30–60 day lag between a problem emerging and a partner knowing about it.

Continuous monitoring:

- KPI ingestion directly from portfolio company systems or standardized templates

- Anomaly detection triggers alerts for: CAC spike >30%, churn acceleration, cash runway <6 months, margin compression, revenue concentration shift
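Threshold alerts like these can start as simple rules before any statistical anomaly detection is layered on. The Python sketch below encodes three of the triggers listed above; the `KpiSnapshot` fields, the treatment of "churn acceleration" as any period-over-period increase, and the sample figures are all illustrative assumptions. Margin compression and revenue concentration rules would follow the same pattern.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class KpiSnapshot:
    company: str
    cac_prev: float            # customer acquisition cost, prior period
    cac_curr: float            # customer acquisition cost, current period
    churn_prev: float          # monthly churn rate, prior period (0-1)
    churn_curr: float          # monthly churn rate, current period (0-1)
    cash: float                # cash on hand
    monthly_net_burn: float    # net cash outflow per month (<= 0 means cash-generative)

def alerts(s: KpiSnapshot) -> List[str]:
    """Return the rule-based alerts triggered by one reporting snapshot."""
    out = []
    if s.cac_prev > 0 and (s.cac_curr - s.cac_prev) / s.cac_prev > 0.30:
        out.append("CAC spike >30%")
    if s.churn_curr > s.churn_prev:
        out.append("churn acceleration")
    if s.monthly_net_burn > 0 and s.cash / s.monthly_net_burn < 6:
        out.append("cash runway <6 months")
    return out

portfolio = [
    KpiSnapshot("PortCo A", cac_prev=1000, cac_curr=1400, churn_prev=0.020,
                churn_curr=0.025, cash=5.0e6, monthly_net_burn=1.0e6),
    KpiSnapshot("PortCo B", cac_prev=1000, cac_curr=1100, churn_prev=0.020,
                churn_curr=0.018, cash=12.0e6, monthly_net_burn=-0.2e6),
]
for snapshot in portfolio:
    print(snapshot.company, alerts(snapshot))
```

Explicit rules are easy for partners to audit and tune; statistical detectors can be added later for patterns that fixed thresholds miss.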

Reporting & Compliance:

- First-draft quarterly letters, KPI packs, and narrative commentary auto-generated from portfolio data

- Consistency-checked against prior periods before partner review

- LP Q&A systems allow investors to query portfolio performance directly

ROI:

- Real-time margin monitoring protects an estimated 2–5% of portfolio EBITDA through early intervention

- Quarterly reporting automation: 15 hours saved per company per cycle. At 30 assets reporting quarterly: 1,800 hours/year recovered, roughly the capacity of one full-time employee
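The reporting arithmetic is worth making explicit, because it depends on the cycle count. With h hours saved per company per cycle, N portfolio companies, and c reporting cycles per year (c = 4 for the quarterly cadence these figures imply), the annual hours recovered H are:

```latex
H = h \times N \times c
\quad\Rightarrow\quad
15 \times 30 \times 4 = 1{,}800 \text{ hours/year},
\qquad
15 \times 20 \times 4 = 1{,}200 \text{ hours/year}
```

The second case is the 20-asset scenario used in the LP reporting estimate.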

7. PortCo EBITDA — Revenue, Contracts & Operating Leverage

The problem: GP operational efficiency gains are finite. Portfolio company EBITDA improvement, by contrast, is multiplied into enterprise value at exit.

Revenue side:

- Full customer contract portfolio reviewed: pricing terms, renewal mechanics, churn risk

- Sales funnel performance benchmarked against sector norms

- Customer segmentation: identifies highest-expansion accounts and concentration risks management hasn't priced

- etc.

Cost side:

- Supplier contract review: renegotiation opportunities, pricing anomalies vs. market rates

- Workforce structure analysis: identifies inefficiencies before they compound

- Operational anomaly detection: inventory, logistics, and process deviations flagged early

- etc.

8. ESG Screening, Scoring & Reporting

ESG has moved from a reporting checkbox to a diligence criterion, an LP expectation, and a value creation lever. AI operationalizes all three.

Screening:

- Pre-scores targets on carbon exposure, governance structure, supply chain risk, regulatory compliance history, and sector-specific ESG dynamics

- Flags material ESG risk before significant diligence time is invested

Diligence:

- Reviews compliance documentation, environmental permits, workforce practices, and governance disclosures

- Benchmarks against SFDR, CSRD, and LP-defined standards

Reporting:

- Generates ESG reports against ILPA, SFDR, and LP-specific templates

9. LP Reporting & Fund Communications

The problem: LP reporting is one of the most time-intensive workflows in PE and one of the least value-additive for the hours it consumes.

What AI does:

- Quarterly fund reports and portco updates drafted from standardized data inputs

- Narrative commentary generated against prior-period benchmarks

- Capital call and distribution notices automated against fund document templates

- LP Q&A intelligence: indexes fund performance data, portfolio reporting, and prior LP communications — answers LP queries with sourced, auditable responses without IR team intervention

ROI: 15 hours saved per portco per reporting cycle. At 20 assets on quarterly cadence: 1,200 hours/year recovered.

10. Exit Planning & Preparation

The problem: Firms that prepare earliest achieve the best outcomes. Most start too late.

Buyer identification & scoring:

- Maps strategic acquirers, financial sponsors, and infrastructure funds against portco profile and recent transaction activity

- Scores buyers by likelihood and strategic fit — updated as the process approaches

Management presentation preparation:

- Generates likely buyer questions based on company profile and sector dynamics

- Prepares management with structured responses, supporting data, and objection handling

Market context:

- Monitors sector sentiment and recent comparable transactions continuously

- Flags developments requiring narrative adjustment before the process launches

The use cases above represent hundreds of hours per deal of synthesis, review, and drafting that currently consume analyst and associate time better spent in front of management teams, in negotiation rooms, and in portfolio operating reviews.

The right use cases are not uniform across PE strategies. Buyout focuses on operational EBITDA impact, supply chain optimization, and post-acquisition value creation. Growth equity prioritizes AI-driven sourcing and market mapping to identify high-growth targets before broader market awareness. Infrastructure uses AI for long-term asset monitoring, predictive maintenance, and regulatory compliance across multi-decade holding periods. Firms that deploy AI generically across their strategy, without calibrating to deal type, consistently underperform those that focus on the highest-friction, highest-stakes moments specific to their model.

3. The Challenges — Why AI Adoption in Private Equity Is Harder Than It Looks

AI is harder to adopt in private equity for reasons that have little to do with models and everything to do with the operational reality of deploying intelligent systems in a domain that doesn't forgive mistakes.

Numbers matter. A wrong revenue figure, a misinterpreted assumption, an incorrect DCF input — one miss on a $10M position and you've destroyed trust forever. That asymmetry shapes everything about how AI must be built and adopted in this environment.

The data problem runs deeper than fragmentation

Most PE firms assume their AI problem is one of access — get the documents in, get answers out. The reality is that financial data is structurally adversarial to machine reading. It was never designed for AI. It was designed for legal compliance.

A typical data room contains documents where tables span multiple pages with repeated headers, footnotes reference exhibits that reference other footnotes, and the same number appears in text, tables, and appendices — sometimes inconsistently.

Standard parsers fail on multi-column proxy statements, nested tables, scanned exhibits, and the chaos that older filings routinely contain. Handling them requires more advanced, and more costly, document processing than general-purpose AI tools typically provide.

Data must be normalized. The chunking strategy (how documents are broken into retrievable segments) must be adapted to financial documents, because it determines whether the AI retrieves the right context or plausible-sounding noise. This normalization work is the unglamorous reality that separates AI deployments that work from those that look impressive in demos and fail in production. It is not a feature. It is a prerequisite.
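A minimal Python sketch of one chunking choice follows: page-bounded, overlapping word windows that carry their document ID and page number, so every retrieved segment can be cited exactly. The window size, the overlap, and the `Chunk` structure are illustrative assumptions. The sketch deliberately does not handle the multi-page tables, repeated headers, or scanned exhibits described above; those need table-aware parsing and OCR upstream.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Chunk:
    doc_id: str
    page: int    # 1-based page number, kept for citations
    text: str

def chunk_pages(doc_id: str, pages: List[str], max_words: int = 200,
                overlap: int = 40) -> List[Chunk]:
    """Split each page into overlapping word windows that never cross a page break."""
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must be in [0, max_words)")
    step = max_words - overlap
    chunks = []
    for page_no, page_text in enumerate(pages, start=1):
        words = page_text.split()
        start = 0
        while start < len(words):
            window = words[start:start + max_words]
            chunks.append(Chunk(doc_id, page_no, " ".join(window)))
            if start + max_words >= len(words):
                break  # this window already reaches the end of the page
            start += step
    return chunks

pages = [" ".join(f"w{i}" for i in range(450)), "Revenue FY24: $48.2M"]
chunks = chunk_pages("CIM-2024", pages)
for c in chunks:
    print(c.doc_id, c.page, len(c.text.split()))
```

Keeping chunks inside page boundaries trades some retrieval context for exact page-level provenance; the overlap reduces the chance that a sentence is split across two chunks.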

Hallucination is a structural risk, not an edge case

Large language models (LLMs) will fabricate specifics when sources are thin, contradictory, or ambiguous. In consumer contexts this is an annoyance. In PE due diligence it is a liability that may not surface for 18 months.

Every AI output that feeds into an investment memo, a valuation model, or an LP report requires explicit provenance — which document, which page, which figure. Outputs without traceable citations are not just unreliable; they are unusable by any senior partner who has to defend the conclusion in a meeting.

This forces a design principle: AI in PE cannot be a black box. Every output must be reviewable, traceable, and overridable.
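One way to enforce "which document, which page, which figure" is to require every generated claim to carry citations and to check each cited quote against the stored source text before the claim reaches a memo. The Python sketch below assumes a hypothetical `corpus` keyed by `(doc_id, page)`; exact substring matching is a deliberately strict simplification, since real pipelines usually normalize whitespace and number formats first.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

@dataclass
class Citation:
    doc_id: str
    page: int
    quote: str  # verbatim source text that supports the claim

@dataclass
class Claim:
    text: str
    citations: List[Citation] = field(default_factory=list)

def verify(claim: Claim, corpus: Dict[Tuple[str, int], str]) -> List[str]:
    """Return a list of provenance problems; an empty list means the claim is traceable."""
    problems = []
    if not claim.citations:
        problems.append("no citation")
    for c in claim.citations:
        page_text = corpus.get((c.doc_id, c.page))
        if page_text is None:
            problems.append(f"missing source {c.doc_id} p.{c.page}")
        elif c.quote not in page_text:
            problems.append(f"quote not found in {c.doc_id} p.{c.page}")
    return problems

corpus = {("CIM", 41): "FY24 revenue was $48.2M, up 12% year over year."}
supported = Claim("FY24 revenue: $48.2M", [Citation("CIM", 41, "$48.2M")])
fabricated = Claim("FY24 revenue: $52.0M", [Citation("CIM", 41, "$52.0M")])
print(verify(supported, corpus), verify(fabricated, corpus))
```

A claim that fails this check can be blocked or routed to a reviewer rather than silently passed into an IC memo.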

The model is not the product — and most firms are optimizing the wrong thing

Anyone can call an LLM API. The models are increasingly commoditized. What makes AI work in financial services is everything built around the model:

- the data normalization layer,

- the domain-specific workflow encoding, and

- the evaluation infrastructure.

This matters for PE adoption because the moat is not the AI; it is the encoded investment judgment. Firms that treat AI as a plug-in will remain dependent on generic outputs. Firms that systematically encode their own methodology into how agents operate will build an advantage that compounds faster than the models improve.

Evaluation infrastructure is non-negotiable — and almost nobody has built it

Most PE AI deployments have no rigorous evaluation infrastructure. They test manually, episodically, and optimistically. A model update ships. A prompt changes. A new document type enters the workflow. Nobody knows whether accuracy improved or degraded because there is no systematic test suite measuring it.

The minimum standard for AI in high-stakes financial workflows is a domain-specific evaluation dataset — test cases that cover the actual failure modes of financial analysis: numeric precision, temporal ambiguity, cross-document consistency, and hallucination resistance. Every material change to the AI system should be measured against that dataset before it touches live deal work. Deployments that skip this step are not moving faster. They are accumulating invisible risk.
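A minimal Python sketch of such a regression gate follows. The case format, the category names (taken from the failure modes listed above), the 0.1% relative tolerance, and the stub system are illustrative assumptions. Cases with `expected=None` test hallucination resistance by requiring the system to abstain rather than produce a number.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

@dataclass
class EvalCase:
    question: str
    expected: Optional[float]  # None means the correct behavior is to abstain
    category: str

def run_eval(system: Callable[[str], Optional[float]], cases: List[EvalCase],
             rel_tol: float = 0.001) -> Dict[str, float]:
    """Return the pass rate per failure-mode category."""
    tally: Dict[str, List[int]] = {}
    for case in cases:
        answer = system(case.question)
        if case.expected is None:
            ok = answer is None  # must not invent a figure
        else:
            ok = answer is not None and abs(answer - case.expected) <= rel_tol * abs(case.expected)
        counts = tally.setdefault(case.category, [0, 0])
        counts[0] += int(ok)
        counts[1] += 1
    return {cat: passed / total for cat, (passed, total) in tally.items()}

def gate(scores: Dict[str, float], baseline: Dict[str, float]) -> bool:
    """Allow a change only if no category falls below its baseline pass rate."""
    return all(scores.get(cat, 0.0) >= rate for cat, rate in baseline.items())

cases = [
    EvalCase("FY24 revenue ($M)?", 48.2, "numeric_precision"),
    EvalCase("EBITDA ($M) for the fiscal year ended March 2023?", 8.0, "temporal_ambiguity"),
    EvalCase("FY2030 revenue ($M)?", None, "hallucination_resistance"),
]
# Stub standing in for the AI system under test (hypothetical answers).
answers = {"FY24 revenue ($M)?": 48.2,
           "EBITDA ($M) for the fiscal year ended March 2023?": 9.1}
scores = run_eval(answers.get, cases)
baseline = {"numeric_precision": 1.0, "temporal_ambiguity": 1.0,
            "hallucination_resistance": 1.0}
print(scores, gate(scores, baseline))
```

Here the stub answers the temporal question with the wrong fiscal year's figure, so the gate blocks the change even though the other categories pass.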

Trust is the rate-limiting factor, and it erodes faster than it builds

When an output cannot be traced back to primary documents and decision logic, it is not usable in an investment committee regardless of whether the number is right.

Trust in AI compounds slowly through repeated, explainable wins and collapses after a single high-stakes miss. This dynamic — not model quality, not integration complexity — is the actual rate limiter on AI adoption inside PE firms. Building it requires designing AI as a collaborative tool, not an autonomous one: the agent does the heavy lifting, surfaces the options, flags the uncertainties, and keeps the senior investor in control of every decision that matters.

Change management is the binding constraint

PwC's research is direct on this point: the binding constraint is people, not technology. While 54% of workers globally have used AI for their jobs in the past year, only 14% use it daily. The gap between awareness and embedded habit is where most PE AI programs quietly stall.

Associates and principals optimize for certainty under time pressure. Learning a new AI workflow takes longer the first several times and carries visible risk if the output is wrong. Without protected time, psychological safety, and visible modeling from senior partners, people revert to the familiar approach — faster today, slower forever. The deal process does not pause for AI onboarding.

Two clocks shape how organizations adapt to AI:

- The capability clock measures how fast AI capabilities improve.

- The adoption clock measures how fast your organization changes how it works because of those capabilities.

Most PE firms have let their capability clock run far ahead of their adoption clock — and the gap is where value gets lost.

Security and confidentiality are non-negotiable — and genuinely complex

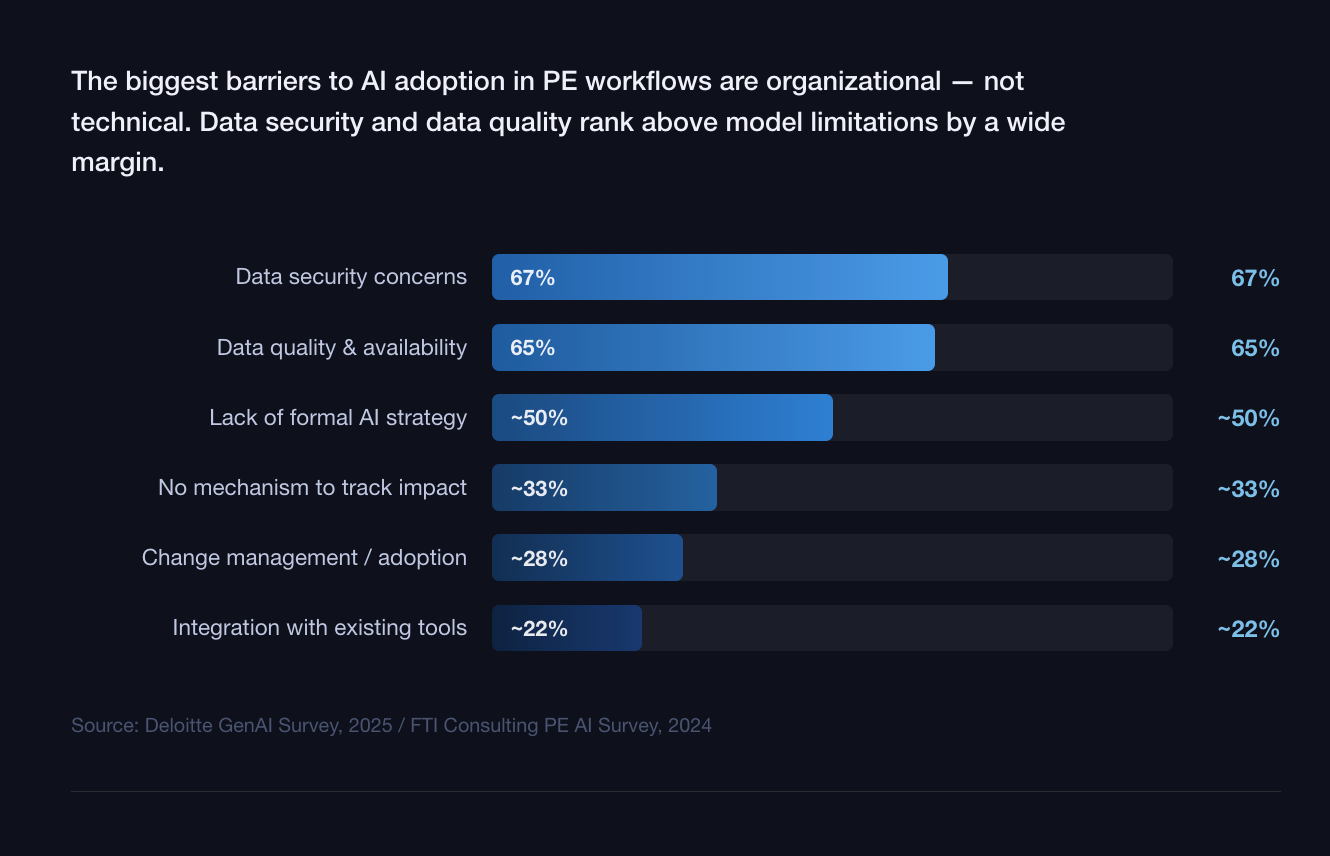

LP data, MNPI, and portfolio company information operate under legal and regulatory constraints that most AI deployments were not designed for. Access controls, data residency, retention policies, and audit trails must satisfy both internal governance and LP due diligence, which requires infrastructure-level enforcement, not application-level promises. Deloitte identifies data security (67%) and data quality (65%) as the leading barriers to GenAI adoption.

The talent gap is structural — and different from what firms expect

The gap is not data scientists. Most PE firms do not need, and cannot justify, an engineering team. What they need, and largely lack, are operators or vendor partners who can translate investment judgment into repeatable AI workflows, maintain prompt quality over time, govern data inputs, and measure whether outputs are actually improving decisions.

This is a new role that sits between investment professional and technologist. It requires enough domain knowledge to know when an AI output is subtly wrong and enough technical fluency to fix the workflow that produced it. That person does not exist in most hiring pipelines, and building them internally takes time that deal pressure rarely allows. General-purpose AI providers, for their part, typically do not support PE firms with these integrations.

What this means for your firm: The winners are not the fastest adopters. They are the firms that invest in the unglamorous infrastructure — clean data, domain-encoded workflows, rigorous evaluation, right partnerships, and explicit human accountability. The model is the least important part.

4. Private Equity Perspectives

LPs are now evaluating AI maturity as part of manager due diligence

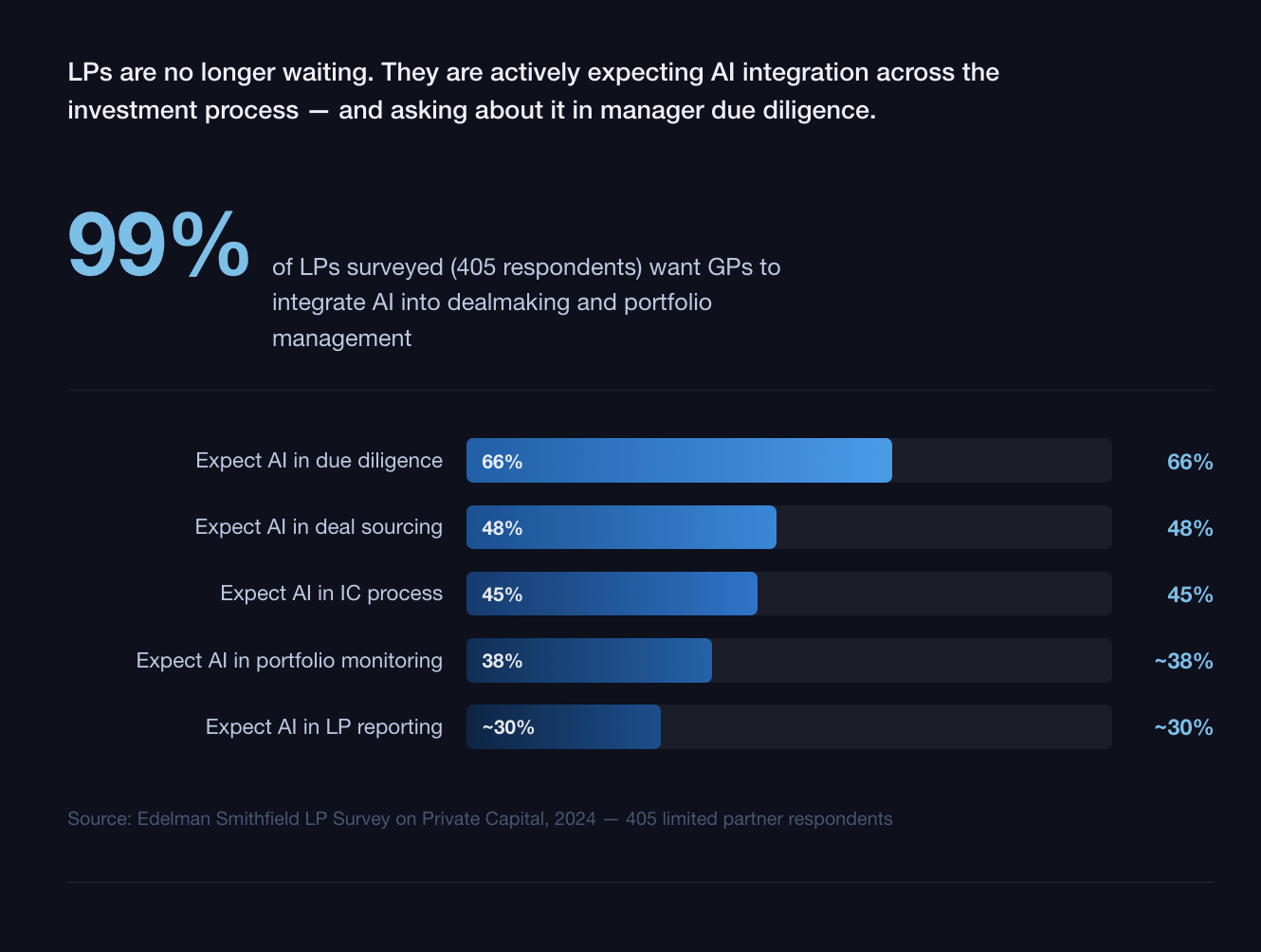

An LP survey covering 405 limited partners shows that 99% want GPs to integrate AI into dealmaking, with 66% explicitly expecting AI in due diligence, 48% in deal sourcing, and 45% inside investment committee processes.

Crucially, LPs emphasize efficiency and analytical support rather than autonomous decision-making — they want faster, more consistent analysis, not machines making investment calls.

In practice this is showing up as new diligence questions:

How is AI protecting and enhancing portfolio EBITDA?

What governance exists around AI outputs that inform IC decisions?

LPs are equally alert to "AI washing" — GPs claiming AI capabilities without any measurable line of sight to value creation. The risk of overstating AI maturity is no longer theoretical; it is showing up in LP due diligence questionnaires.

Portfolio company implementation is where ambition meets friction

Pushing AI into portfolio companies is where the "aspirational" collides with "practical."

Reports from MIT's NANDA initiative suggest that up to 95% of generative AI pilots at the company level are failing — typically not because the technology doesn't work, but because the prerequisites for it to work don't exist.

Real value creation requires six conditions to align: clean data, modern infrastructure, skilled talent, governance guardrails, cultural openness, and aligned use cases. In a mid-market portfolio company running on legacy ERP systems with a two-person finance team, those conditions rarely co-exist out of the box.

However, when implementation succeeds, the impact is concrete. Examples:

- A large hospital system automated claims appeals using AI, reallocating nursing staff and achieving approximately $2.4 million in anticipated annual savings.

- An engineering firm used AI to prioritize RFP opportunities, generating a 20%+ increase in win rate and a 60%+ reduction in review time.

The implication for operating partners: AI readiness assessment at diligence stage is no longer optional. The cost of inheriting a data infrastructure that cannot support AI-driven value creation is increasingly priced into post-close timelines.

The junior talent question is real — and unresolved

The most acute tension exists at the entry level. AI handles significant portions of what junior analysts have traditionally done: data gathering, document synthesis, memo drafting, etc.

The concern — raised consistently by senior partners across PE firms — is that by automating these tasks, the industry removes the repetitions through which investment judgment is built.

The emerging consensus is not replacement but augmentation: AI removes the friction of data processing but does not remove the need for human judgment in the final recommendation. The firms handling this well are deliberately preserving high-judgment exposure for junior staff — keeping them in management meetings, in negotiation prep, in thesis-building — while AI absorbs the synthesis and formatting work. The firms not handling it well are discovering that a class of analysts who never built the foundations of investment judgment is a more serious problem than a shortage of PowerPoint slides.

5. Where AI Is Going for Private Equity

The next phase of AI adoption in private equity will not be defined by better models. It will be defined by how firms reorganize decision-making around machines that can observe, reason, and act continuously.

2025–2027: From AI assistants to AI agents and complex AI orchestrations

Since 2025, the industry has been shifting from AI tools that answer questions and draft outputs to AI agents that execute workflows continuously. Agentic AI introduces orchestrator agents that coordinate multi-step processes, marking the move from AI-enabled analysis to AI-orchestrated workflows in which agents sense, decide, and act across origination, diligence, and portfolio operations.


By 2027, partial AI adoption will be a competitive disadvantage. FTI Consulting's 2026 outlook notes that firms are moving toward "increasingly complex AI orchestrations" spanning multiple workflows, supported by deeper data infrastructure and fund-level coordination. Isolated pilots will not survive contact with real deal pressure. AI adoption in private equity is beginning to look less like a technology program and more like an operating system for the firm.

AI readiness becomes a diligence criterion.

Private equity firms are beginning to evaluate portfolio companies — and targets — on their AI readiness. Not flashy models. Data hygiene, process instrumentation, leadership capability, and the ability to translate insight into action. Firms increasingly view AI maturity as a proxy for operational discipline and future scalability.

In practice, this shows up as new questions in commercial and operational diligence:

How clean is the data?

Where are decisions made manually that could be systematized?

Which value levers could be monitored continuously instead of quarterly?

Over the next few years, AI due diligence in private equity will evolve from document review acceleration to assessing whether a business can support intelligent execution post-close.

A barbell effect is emerging.

Adoption is bifurcating: technically fluent smaller managers can offset limited resources with accessible AI tools, while megafunds build proprietary infrastructure. Mid-market firms risk being squeezed unless they deliberately structure AI as an operating capability rather than a sequence of pilots.

The firms that navigate this successfully will be those that resist the temptation to wait for a cleaner, cheaper, more obvious solution — and instead build the operating discipline now, while the window to establish a differentiated capability is still open.

The talent model shifts upward.

Agentic AI does not eliminate humans. It changes which humans matter. As AI systems absorb coordination and synthesis work, the bottleneck moves up the organization. Fewer junior generalists will spend nights stitching slides. More value will accrue to senior investors and operators who can frame the right questions, interpret weak signals, and intervene decisively. Firms investing in AI fluency at the partner and operating-partner level see stronger adoption than those treating AI as a back-office or IT concern.

Governance and trust become differentiators.

As autonomy increases, so does scrutiny. LPs, regulators, and investment committees will demand clarity on how AI systems reach conclusions and where humans retain control.

The winners will not be the firms that give agents the most freedom — they will be the ones that design explicit human-in-the-loop thresholds: when agents can act, when they must escalate, and how decisions are audited. In private equity, trust compounds slowly and evaporates quickly. Governance is not a brake on AI adoption. It is what makes scale possible.

Leading firms will define explicit handoffs: where AI proposes, where humans approve, where overrides are required. Provenance, traceability, and explainability need to be built into IC-grade outputs from the start. The system needs to be designed to be reviewed.
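Such handoffs can be written down as an explicit policy rather than left to each agent's prompt. The Python sketch below routes each proposed agent action to act, approve, or escalate and records an audit entry; the action names, confidence thresholds, and dollar limit are hypothetical examples, not recommended values.

```python
from enum import Enum
from typing import Dict, List, Optional

class Route(Enum):
    AUTO = "agent may act"
    APPROVE = "human must approve"
    ESCALATE = "escalate to deal lead"

# Hypothetical policy: which actions may run autonomously, and when to escalate.
POLICY: Dict[str, dict] = {
    "flag_kpi_anomaly": {"auto_allowed": True, "min_confidence": 0.6,
                         "escalate_above_usd": float("inf")},
    "draft_ic_memo_section": {"auto_allowed": False, "min_confidence": 1.0,
                              "escalate_above_usd": float("inf")},
    "propose_supplier_renegotiation": {"auto_allowed": False, "min_confidence": 1.0,
                                       "escalate_above_usd": 1_000_000},
}

def route(action: str, confidence: float, exposure_usd: float,
          audit_log: Optional[List[dict]] = None) -> Route:
    """Decide who acts on a proposed agent action, and log the decision if a log is given."""
    rule = POLICY.get(action)
    if rule is None or exposure_usd > rule["escalate_above_usd"]:
        decision = Route.ESCALATE  # unknown or high-exposure actions go to a human lead
    elif rule["auto_allowed"] and confidence >= rule["min_confidence"]:
        decision = Route.AUTO
    else:
        decision = Route.APPROVE
    if audit_log is not None:
        audit_log.append({"action": action, "confidence": confidence,
                          "exposure_usd": exposure_usd, "route": decision.name})
    return decision

log: List[dict] = []
print(route("flag_kpi_anomaly", 0.72, 0, log))                        # Route.AUTO
print(route("draft_ic_memo_section", 0.99, 0, log))                   # Route.APPROVE
print(route("propose_supplier_renegotiation", 0.90, 2_500_000, log))  # Route.ESCALATE
print(route("wire_funds", 0.99, 10, log))                             # Route.ESCALATE
```

Defaulting unknown actions to escalation means a new agent capability cannot act autonomously until someone has explicitly added it to the policy.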

6. Conclusion

AI adoption in private equity is no longer a question of experimentation. It is a question of operating discipline. The firms creating durable advantage are not those deploying the most tools — they are those redesigning how decisions are made, monitored, and governed across the deal lifecycle.

AI delivers value when it is embedded into workflows, grounded in clean data, and paired with explicit human judgment at the moments that matter. Where those conditions are absent, pilots stall and trust erodes. Where they are present, the compounding effect is real — and it is beginning to show up in the gap between firms that see more deals, close faster, and intervene in portfolio problems earlier than their peers.

Over the next few years, AI will increasingly function as infrastructure rather than augmentation — continuously observing signals, flagging risks, and prompting action between investment committee meetings. That shift will raise the bar for diligence, compress response times, and reward firms that treat AI as a core operating capability rather than a side project.

At Lampi AI, we build AI agents for private equity firms — purpose-built for the deal lifecycle. If you are mapping your firm's AI strategy or pressure-testing where AI can create real operating leverage, we would be glad to explore what is possible together.